Hi

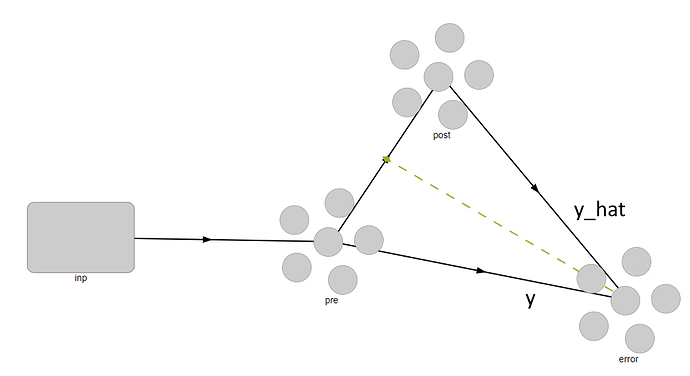

Does anyone know how to get the output (y) and the estimated output (y_hat) in the make_step function when implementing a learning rule?

You can project whatever signals you like into your learning rule and use them there. For example:

conn = nengo.Connection(pre, post, learning_rule_type=MyLearningRule(), ...)

nengo.Connection(y, conn.learning_rule[:y.dimensions], synapse=synapse)

nengo.Connection(y_hat, conn.learning_rule[y.dimensions:], synapse=synapse)

In defining MyLearningRule, the rule.size_in would need to be equal to the number of dimensions for y plus the number of dimensions for y_hat.
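As a minimal sketch of what those two connections deliver (plain NumPy, not Nengo's own code): the rule sees a single input vector of length size_in, where the first block was projected from y and the remainder from y_hat. The helper name split_rule_input and the sample values are hypothetical, and the error is formed as y_hat - y, the convention PES uses.

```python
import numpy as np


def split_rule_input(rule_input, y_dims):
    """Split the concatenated learning-rule input back into (y, y_hat)."""
    rule_input = np.asarray(rule_input, dtype=float)
    y = rule_input[:y_dims]      # block projected into learning_rule[:y_dims]
    y_hat = rule_input[y_dims:]  # block projected into learning_rule[y_dims:]
    return y, y_hat


# Hypothetical case: y and y_hat are both 2-dimensional, so size_in == 4.
rule_input = np.array([0.5, -0.2, 0.4, -0.1])
y, y_hat = split_rule_input(rule_input, y_dims=2)

# Error in the y_hat - y convention.
error = y_hat - y
```

Inside a real rule, the same slicing would be applied to whatever array the rule's input signal holds at each time step.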

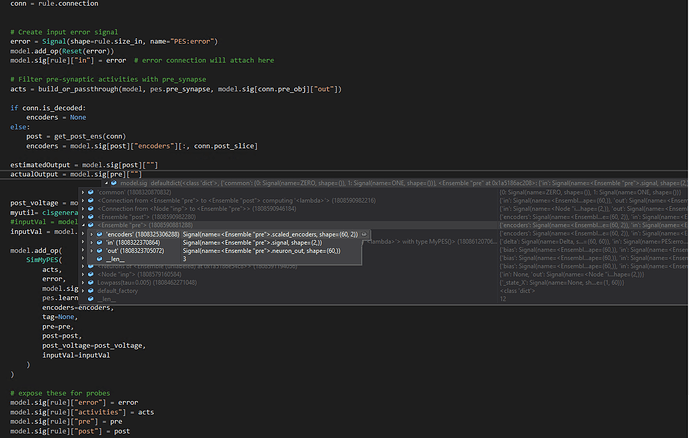

You can then refer to how we use this within PES:

For the PES learning rule we only need an error signal (y_hat - y; see example), but you are free to name this signal whatever you like. It will contain any information that you can project using Nengo connections, and you can then use that signal within your learning rule implementation however you please. The following shows how we use the error within the PES implementation, for reference:

If you have some follow-up questions please let us know what code you have tried or where you are having difficulties and what problem you are seeing. Thanks, and hope this helps!

Hi @arvoelke, thank you so much for the reply. However, I’m still not quite clear on a few things.

I have used a simple example to understand this, and I’m looking to use the estimated output (y_hat) and the membrane potential of a specific ensemble in the “make_step” function.

First, I have added my learning rule and a probe in the main file: NengoTest/PES_sample.py at 1f4d2280f37dadce0732357ff1c344e9b889844f · hamed-Modiri/NengoTest · GitHub

Next, instances of Signal have been defined in NengoTest/MyLearning.py at 1be0d5e60b7b0883836437116fb0daca1951d1ce · hamed-Modiri/NengoTest · GitHub

Finally, the signals are used in “make_step”: NengoTest/MyLearning.py at afec4c1998c90e45c860fa05318deddfd5c320d0 · hamed-Modiri/NengoTest · GitHub

But I get exceptions on all three of these lines of code:

pre = signals[self.pre]

post = signals[self.post]

mempotential = signals[self.post]["voltage"]

Could you please clarify this for me?

I really appreciate your help

In the future, it might help to reduce the code that you have to the smallest amount of code required to trigger the problem you are seeing. This also makes it easier for us to give corrections.

I took your code and modified it so that it would run without any errors. Here are the steps you can follow to get it running. However, I’m not sure if it does what you need or want in the end, because I didn’t review the rest of the code.

-

The voltage signal for the post ensemble can be accessed like so inside of

build_mypes:post_voltage = model.sig[conn.post_obj.neurons]["voltage"]This is assuming the

postobject is an ensemble (as it is in your code). This signal is then passed toSimMyPES, and handled as shown in the following steps.By the way, I noticed that you are creating new signals for

preandpost. I don’t think this is what you want to be doing. You likely want to be grabbing the existing signals frommodel.sig, like I did above. -

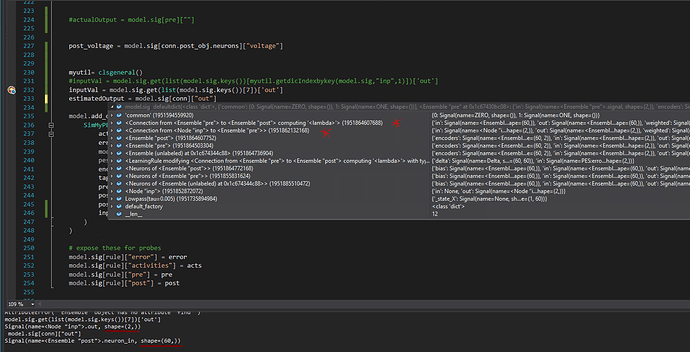

In order to be able to access

signals[self.pre]andsignals[self.post]they need to be included in the operator’sreadsarray. This is how the builder knows how to organize the computational graph. The same applies to yourpost_voltagesignal. So in the end this looks like:self.reads = [pre_filtered, error, pre, post, post_voltage] + ([] if encoders is None else [encoders]) ... @property def pre(self): return self.reads[2] @property def post(self): return self.reads[3] @property def post_voltage(self): return self.reads[4] ... def make_step(self, signals, dt, rng): ... pre = signals[self.pre] post = signals[self.post] mempotential = signals[self.post_voltage]You can also remove all references to

pre_filtered,error, andencoders, here, if you aren’t using them. -

In

PES_sample.py, yourweights_inputis attempting to probe the weights ofconninput, which you can’t do becauseconninputdoesn’t have weights (that connection is directly injecting current from an input node into thepreensemble). And so I also had to comment that line out inPES_sample.pyto get it to run.

Unfortunately, I can’t find the right signal that represents the real value of the actual and estimated output. I would be grateful if you could guide me through this.

When you say “real value of actual and estimated output”, what values are you looking for? The neural activities from the pre-synaptic population and the decoded value of those activities for some function you are specifying? Or something else?

I mean any values that are used to calculate the error. In this example, that means the decoded values of the “post” and “pre” populations.

The decoded values of the pre and post populations can be found in model.sig[conn0]["out"] and model.sig[conn1]["out"], where conn0 is the connection from pre to error and conn1 is the connection from post to error.

But note that rather than trying to directly access the values of Signals outside your learning rule, you are probably better off connecting pre/post up to the learning rule, and then just using the value of the learning rule input signal. That is, you should use the model’s connection structure to ensure that you are delivering whatever information you need to the learning rule, and then your learning rule implementation would just use that information (rather than the learning rule implementation itself trying to draw information from various places in your model).

Thank you for the quick reply. But I can’t find those connections in the “model.sig” object, and the current connection has a different shape, (#neurons,), from what I expect.

I don’t see any “error” population in your model, unlike the diagram above, or any connections to either an “error” population or to your learning rule. Are you sure those connections exist in your Network? I.e. do you have a line that looks like nengo.Connection(pre, error), or nengo.Connection(pre, conn.learning_rule)?

What shape does it have, and what shape do you expect it to have?

You are right. I have been using the PES template as my learning rule, so the error population comes from that and should be ignored. So, I need the decoded value of “post” as y_hat.

In this sample I expect a (2,1) vector.

If you only need the decoded value of post, then you would just connect post to your learning rule (like nengo.Connection(post, conn.learning_rule)), and then the decoded value of post would be available in model.sig[rule]["in"].
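To make the shape difference from earlier in the thread concrete, here is a rough NumPy sketch (not Nengo code) of the usual relation between neural activities and a decoded value: the activities have one entry per neuron, while the decoded value has one entry per represented dimension. The sizes and random values below are hypothetical stand-ins for a real ensemble.

```python
import numpy as np

rng = np.random.default_rng(0)
n_neurons, dimensions = 50, 2  # hypothetical sizes for the post ensemble

# One activity value per neuron: this is the (#neurons,) shape.
activities = rng.random(n_neurons)

# Decoders map neuron space to the represented space.
decoders = rng.normal(size=(dimensions, n_neurons)) / n_neurons

# Decoded estimate: one value per represented dimension.
y_hat = decoders @ activities
```

Note that the decoded value here has shape (2,) rather than (2,1); a signal of shape (#neurons,) is neuron-level activity rather than a decoded value.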

As I mentioned in the second post, this method didn’t work in my code. I mean, there was no model.sig[rule]["in"] signal in the model.

Would you please share an error calculation script inside the make_step function, in a simple example?

You need to create the input signal yourself as part of the build process (which you were doing in the code snippet you posted here, under the section #Create input error signal).
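Once that input signal exists and something is connected to it, the step function can treat it as the error. Below is a minimal sketch of a PES-style update in plain NumPy, under some assumptions: a plain dict stands in for the simulator's signal store, the key names are hypothetical, and the input already holds y_hat - y. It is an illustration of the pattern, not a copy of Nengo's implementation.

```python
import numpy as np


def make_step(signals, learning_rate, dt):
    """Return a step function that writes a PES-style weight change.

    `signals` is a plain dict standing in for the simulator's signal
    store; the keys used here are hypothetical.
    """
    error = signals["in"]                   # learning-rule input, e.g. y_hat - y
    pre_filtered = signals["pre_filtered"]  # filtered pre-synaptic activities
    delta = signals["delta"]                # weight change, updated in place

    n_neurons = pre_filtered.shape[0]
    alpha = -learning_rate * dt / n_neurons

    def step():
        # Outer product: one row per output dimension, one column per neuron.
        delta[...] = np.outer(alpha * error, pre_filtered)

    return step


# Hypothetical values: 2-D error, 3 pre-synaptic neurons.
signals = {
    "in": np.array([0.2, -0.1]),
    "pre_filtered": np.array([10.0, 0.0, 30.0]),
    "delta": np.zeros((2, 3)),
}
step = make_step(signals, learning_rate=1e-4, dt=0.001)
step()
```

The arrays are looked up once when make_step is called, and the returned closure only reads and writes them, which is the same division of work as in the operator shown earlier in the thread.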