Hello,

I'm trying to learn how to build a dynamical system in Nengo whose value ramps up until it saturates (here, at 1) and then resets to 0. This should be a continuous process. I tried using more neurons and adjusting the synapse and the radius, but the output still doesn't follow the behavior I expect. Am I missing something?
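
To make the target concrete, here is a minimal sketch of the ramp-and-reset behavior on its own, without Nengo. The name `ramp_reference` and its parameters are only illustrative (they are not part of any library), and the defaults are assumed from the values used in my snippet:

```python
import numpy as np


def ramp_reference(n_steps, step=0.0005, threshold=1.0, x0=0.0):
    """Iterate x <- x + step, resetting x to 0 once it exceeds threshold.

    Hypothetical helper for illustration; the defaults mirror the
    constants used in the feedback function of the snippet.
    """
    xs = np.empty(n_steps)
    x = x0
    for i in range(n_steps):
        xs[i] = x          # record the current value
        x = x + step       # ramp up by a fixed increment
        if x > threshold:  # saturated: reset to zero
            x = 0.0
    return xs


ref = ramp_reference(5000)
```

Plotting `ref` against the step index should give a sawtooth that never goes above the threshold.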

Here is a snippet that reproduces the behavior:

    import nengo
    import numpy as np
    import matplotlib.pyplot as plt

    tau = 0.01


    def feedback(x):
        # Ramp up by a small increment; reset to 0 once the value exceeds 1.
        x = x + 0.0005
        if x > 1:
            return 0
        return x


    model = nengo.Network(seed=42)
    with model:
        state = nengo.Ensemble(2000, 1, radius=np.sqrt(2))
        # Recurrent connection that feeds the transformed state back into itself.
        nengo.Connection(state, state, function=feedback, synapse=tau)
        state_probe = nengo.Probe(state, synapse=tau)

    with nengo.Simulator(model) as sim:
        sim.run(5)

    # Reference trajectory: apply feedback() directly, once per recorded sample.
    samples = len(sim.data[state_probe])
    x = sim.data[state_probe][0]
    points = [x]
    for i in range(samples - 1):
        x = feedback(x)
        points.append(x)
    points = np.array(points)

    # Blue: decoded Nengo output. Red: direct iteration of feedback().
    plt.plot(range(samples), sim.data[state_probe], label="x1", alpha=0.8)
    plt.plot(range(samples), points, color="r")
    plt.show()

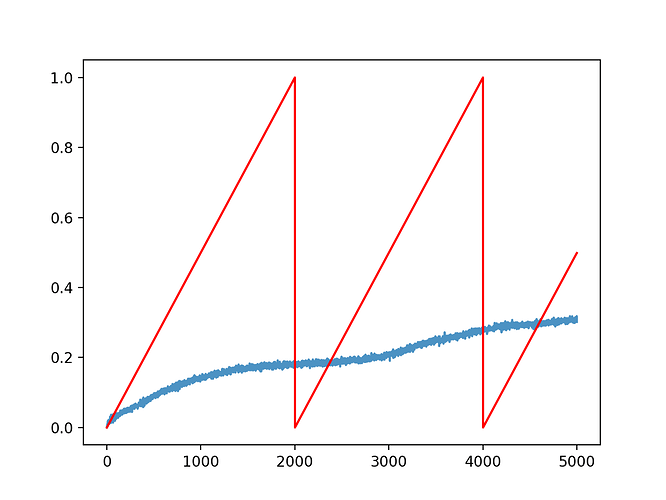

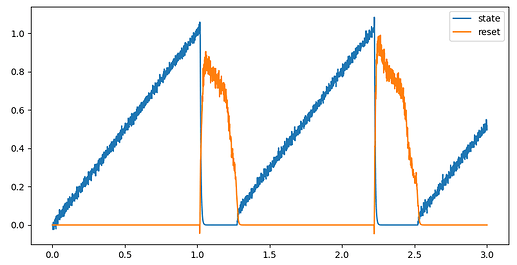

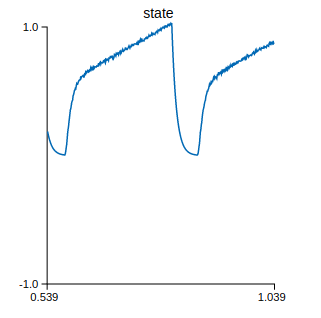

Resulting plot.

Basically, I want the blue line (the Nengo output) to match the red line (the direct iteration of `feedback`).

Thank you for any input.